Artificial Intelligence (AI): Applications and Examples

Artificial Intelligence (AI) is becoming more present every day in business, industry, and education as well as in our private lives. Not a day goes by when we don’t knowingly or unknowingly rely on the power of AI algorithms. This paper presents the basic concepts of Artificial Intelligence, Machine Learning, and Deep Learning.

Artificial intelligence in our everyday life

People who do not deal with AI technology in detail, either professionally or privately, often have only a vague idea of it. This idea is mainly inspired by popular science fiction movies like “Terminator”, “Matrix” or “A.I. Artificial Intelligence”. However, AI is now present in far less spectacular and far less humanoid forms, such as spam filters or digital voice assistants like “Alexa” (Amazon) or “Siri” (Apple), and is an essential part of everyday life. For example, AI algorithms are hidden behind every Google query, filtering and categorizing the most suitable hits from the almost infinite flow of information on the Internet. However, there are also AI applications that sometimes achieve astonishing but also fatal results, so that the use of AI algorithms to solve problems is not yet generally accepted, either in society or among authorities.

The progressive digitalization of all branches of industry, together with steadily decreasing costs of data processing and storage, is paving the way for AI to move from an academic subject into private and professional everyday life. Given intensified research and increasing data volumes, AI algorithms will become an integral part of our daily lives in the medium term, much as spam filters already are. Concrete examples of this vision are autonomous driving and sustainable, optimized smart cities, both of which rely in particular on AI algorithms from so-called deep learning (DL).

The development of AI

AI is a rapidly growing technology that has now found its way into almost all industries worldwide and is expected to bring about a new revolution due to its data processing capabilities.

The term Artificial Intelligence is an overarching concept first introduced at a conference at Dartmouth College in 1956. Artificial Intelligence is generally understood to mean that machines autonomously take over and process tasks, using algorithms that enable the machine to act intelligently. A very brief yet concise definition of AI (out of many possible) was given by Elaine Rich, who defines AI as follows:

“Artificial intelligence is the study of how to get computers to do things that humans are better at right now.”

AI is used as an umbrella term for all developments in computer science that are primarily concerned with automating intelligent and emergent behavior such as visual perception, speech recognition, language translation, and decision making. Over the past decades, a number of subfields of AI have emerged. In manufacturing and logistics, the most relevant of these has been machine learning, which initially focused on pattern recognition and later on deep learning, which relies exclusively on artificial neural networks. Models and algorithms form essential building blocks for applying AI to practical problems. An algorithm is defined here as a set of unambiguous rules given to an AI program to help it learn independently from experience (here: data) for a given task (here: the problem under study) under a performance measure (here: the error between the AI prediction and a known ground truth within the dataset). The experience is an entire dataset consisting of data points (also called examples). A single data point consists at least of features (single measurable properties; explanatory variables).

For specific tasks, targets (dependent variables) can also be assigned to the features. The simplest example of this is a regression task, in which an AI algorithm must find the optimal model parameters given the experience, e.g., using least squares as the performance measure.
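The regression task described above can be illustrated with a minimal sketch. It assumes a single feature per data point and a straight-line model; the dataset values and the names `fit_line` and `mean_squared_error` are hypothetical and chosen only for illustration.

```python
# Hypothetical toy dataset (the "experience"): each data point is
# a pair of (feature x, target y).
data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]


def fit_line(points):
    """Return the slope and intercept that minimize the sum of squared errors."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    # Closed-form least-squares solution for a model with one feature.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def mean_squared_error(points, slope, intercept):
    """Performance measure: average squared gap between prediction and target."""
    return sum((slope * x + intercept - y) ** 2 for x, y in points) / len(points)


slope, intercept = fit_line(data)
mse = mean_squared_error(data, slope, intercept)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, mse={mse:.4f}")
```

Here the model parameters are the slope and intercept, the task is predicting the target from the feature, and the performance measure is the mean squared error on the dataset.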

Machine Learning

Machine learning distinguishes two main tasks, which are briefly introduced here. Supervised learning aims to develop a predictive model based on both influence (input) variables and response (output) variables, whereas unsupervised learning trains a model based only on the influence variables, for example to group similar data points (clustering). Supervised learning is further divided into classification and regression problems. In classification, the response variables can only take on discrete values; in regression, they are continuous.
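The difference between the two tasks can be sketched on a small one-dimensional dataset. This is only an illustration under simple assumptions: a nearest-centroid rule stands in for supervised classification and a two-cluster variant of k-means stands in for unsupervised clustering. The data and all function names are hypothetical.

```python
def nearest_centroid_fit(features, labels):
    """Supervised: use the known labels to compute one mean (centroid) per class."""
    sums, counts = {}, {}
    for x, label in zip(features, labels):
        sums[label] = sums.get(label, 0.0) + x
        counts[label] = counts.get(label, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}


def nearest_centroid_predict(centroids, x):
    """Assign x to the class whose centroid is closest."""
    return min(centroids, key=lambda label: abs(x - centroids[label]))


def two_means(features, iterations=10):
    """Unsupervised: split 1-D features into two clusters without any labels.

    Assumes the features contain at least two distinct values.
    """
    c0, c1 = min(features), max(features)
    for _ in range(iterations):
        group0 = [x for x in features if abs(x - c0) <= abs(x - c1)]
        group1 = [x for x in features if abs(x - c0) > abs(x - c1)]
        c0, c1 = sum(group0) / len(group0), sum(group1) / len(group1)
    return c0, c1


# Hypothetical influence variables and (for the supervised case) response variables.
features = [1.0, 1.2, 0.8, 5.0, 5.2, 4.8]
labels = ["low", "low", "low", "high", "high", "high"]

centroids = nearest_centroid_fit(features, labels)   # needs features and labels
prediction = nearest_centroid_predict(centroids, 4.0)
cluster_centers = two_means(features)                # needs features only
```

The supervised model can name the class of a new data point because it has seen labels. The clustering step finds the same two groups but has no names for them.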

Deep Learning

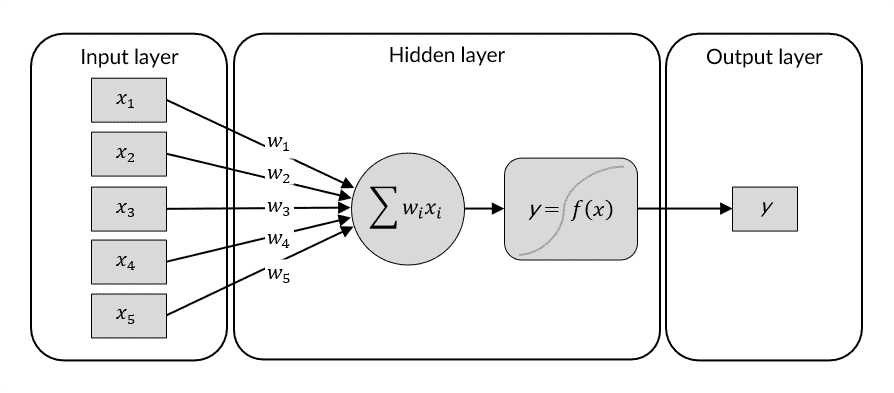

Deep Learning uses Artificial Neural Networks (ANN) to recognize patterns and highly nonlinear relationships in data. An artificial neural network is a collection of connected nodes loosely modeled on the neurons of the human brain. Because of their ability to reproduce and model nonlinear processes, artificial neural networks have found application in many fields, such as material modeling and development, system identification and control (vehicle control, process control), pattern recognition (radar systems, face recognition, signal classification, object recognition, and more), and sequence recognition (gesture, speech, handwriting, and text recognition). An ANN is built by connecting layers consisting of multiple neurons, where the first layer of the ANN is the input layer, the last layer is the output layer, and the layers in between are called hidden layers. When an ANN has more than three hidden layers, it is called a deep neural network.
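The layered structure described above can be sketched as a forward pass through a small, untrained network. This is a minimal illustration, not a training procedure: the layer sizes, the random weights, the `tanh` activation, and the names `build_network` and `forward` are all assumptions made for the example.

```python
import math
import random


def build_network(layer_sizes, seed=0):
    """Create one (weights, biases) pair per connection between consecutive layers."""
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
        biases = [0.0] * n_out
        layers.append((weights, biases))
    return layers


def forward(layers, inputs):
    """Propagate the inputs through every layer and return the output layer's values."""
    activations = list(inputs)
    for index, (weights, biases) in enumerate(layers):
        sums = [
            sum(w * a for w, a in zip(row, activations)) + b
            for row, b in zip(weights, biases)
        ]
        is_output_layer = index == len(layers) - 1
        # Hidden layers apply a nonlinearity (tanh); the output layer stays linear.
        activations = sums if is_output_layer else [math.tanh(s) for s in sums]
    return activations


# Input layer with 2 neurons, four hidden layers with 4 neurons each,
# and an output layer with 1 neuron.
layer_sizes = [2, 4, 4, 4, 4, 1]
num_hidden_layers = len(layer_sizes) - 2
network = build_network(layer_sizes)
output = forward(network, [0.5, -0.2])
print(f"hidden layers: {num_hidden_layers}, output: {output}")
```

With four hidden layers, this network counts as deep under the threshold given above. The nonlinear activation in the hidden layers is what lets such a network model nonlinear relationships; without it, the stacked layers would collapse into a single linear map.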